Journal Description

Mathematics

Mathematics is a peer-reviewed, open access journal which provides an advanced forum for studies related to mathematics, and is published semimonthly online by MDPI. The European Society for Fuzzy Logic and Technology (EUSFLAT) and International Society for the Study of Information (IS4SI) are affiliated with Mathematics and their members receive a discount on article processing charges.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, SCIE (Web of Science), RePEc, and other databases.

- Journal Rank: JCR - Q1 (Mathematics) / CiteScore - Q1 (General Mathematics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 16.9 days after submission; acceptance to publication is undertaken in 2.6 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- Sections: published in 13 topical sections.

- Companion journals for Mathematics include: Foundations, AppliedMath, Analytics, International Journal of Topology, Geometry and Logics.

Impact Factor: 2.4 (2022); 5-Year Impact Factor: 2.3 (2022)

Latest Articles

On α-Pseudo Spiralike Functions Associated with Exponential Pareto Distribution (EPD) and Libera Integral Operator

Mathematics 2024, 12(9), 1305; https://doi.org/10.3390/math12091305 (registering DOI) - 25 Apr 2024

Abstract

The present study aims at investigating some characterizations of a new subclass

(This article belongs to the Special Issue New Trends in Complex Analysis Research, 2nd Edition)

Open AccessArticle

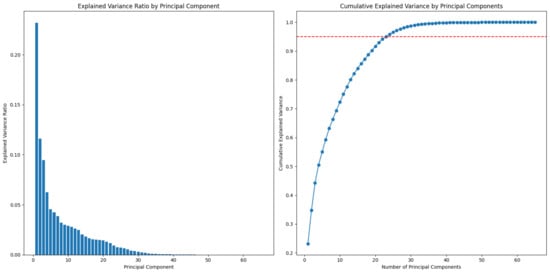

Sparsity-Constrained Vector Autoregressive Moving Average Models for Anomaly Detection of Complex Systems with Multisensory Signals

by

Meng Ma, Zhongyi Zhang, Zhi Zhai and Zhirong Zhong

Mathematics 2024, 12(9), 1304; https://doi.org/10.3390/math12091304 (registering DOI) - 25 Apr 2024

Abstract

Detecting anomalies in large, complex systems is a critical and challenging task, and this is especially true for high-dimensional anomaly detection due to the underlying dependency structures among sensors. To incorporate the interrelationships among various sensors, a novel sparsity-constrained vector autoregressive moving average (scVARMA) model is proposed for anomaly detection in complex systems with multisensory signals. This model aims to leverage the inherent relationships and dynamics among various sensor readings, providing a more comprehensive and accurate analysis suited to the intricate behavior of complex systems. This research uses convex optimization to search for a sparse parameterization, following the principle of parsimony. The sparse model not only helps meet the real-time requirements of online monitoring strategies but also preserves the correlations among different sensory signals. The performance of the proposed scVARMA model is validated using real-world data from complex systems. The results affirm the superiority of the proposed scheme, demonstrating its enhanced performance and potential in practical applications.

(This article belongs to the Special Issue Functional Statistics: Outliers Detection and Quality Control, 2nd Edition)

Open Access Article

TPTM-HANN-GA: A Novel Hyperparameter Optimization Framework Integrating the Taguchi Method, an Artificial Neural Network, and a Genetic Algorithm for the Precise Prediction of Cardiovascular Disease Risk

by

Chia-Ming Lin and Yu-Shiang Lin

Mathematics 2024, 12(9), 1303; https://doi.org/10.3390/math12091303 (registering DOI) - 25 Apr 2024

Abstract

The timely and precise prediction of cardiovascular disease (CVD) risk is essential for effective prevention and intervention. This study proposes a novel framework, called TPTM-HANN-GA, that integrates the two-phase Taguchi method (TPTM), the hyperparameter artificial neural network (HANN), and a genetic algorithm (GA). This framework efficiently optimizes hyperparameters for an artificial neural network (ANN) model during the training stage, significantly enhancing prediction accuracy for CVD risk. The proposed TPTM-HANN-GA framework requires far fewer experiments than a traditional grid search, making it highly suitable for application in resource-constrained, low-power computers and edge artificial intelligence (edge AI) devices. Furthermore, the proposed TPTM-HANN-GA framework successfully identified the optimal configurations for the ANN model’s hyperparameters, resulting in a hidden layer of 4 nodes, a tanh activation function, an SGD optimizer, a learning rate of 0.23425849, a momentum rate of 0.75462782, and seven hidden nodes. This optimized ANN model achieves 74.25% accuracy in predicting the risk of cardiovascular disease, which exceeds the existing state-of-the-art GA-ANN and TSTO-ANN models. The proposed TPTM-HANN-GA framework enables personalized CVD prediction to be efficiently conducted on low-power computers and edge-AI devices, achieving the goal of point-of-care testing (POCT) and empowering individuals to manage their heart health effectively.

Open Access Article

Single and Multi-Valued Ordered-Theoretic Perov Fixed-Point Results for θ-Contraction with Application to Nonlinear System of Matrix Equations

by

Fahim Ud Din, Salha Alshaikey, Umar Ishtiaq, Muhammad Din and Salvatore Sessa

Mathematics 2024, 12(9), 1302; https://doi.org/10.3390/math12091302 (registering DOI) - 25 Apr 2024

Abstract

This paper combines the concept of an arbitrary binary connection with the widely recognized principle of

Open AccessArticle

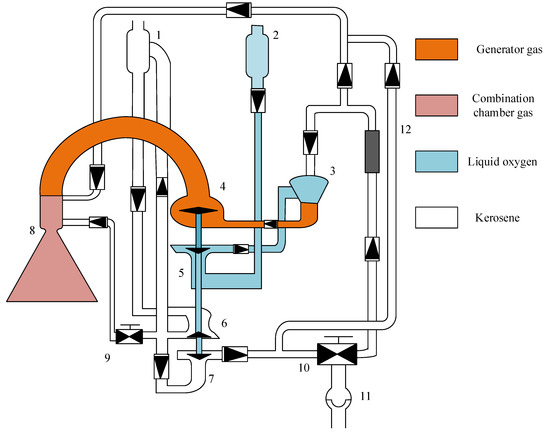

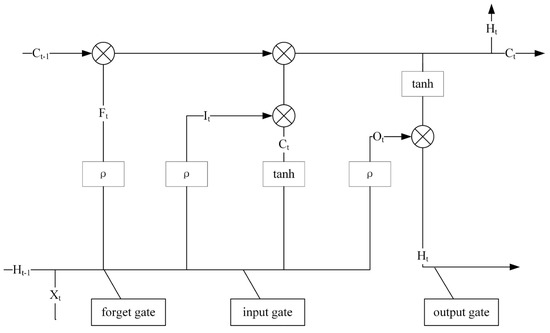

Synergising an Advanced Optimisation Technique with Deep Learning: A Novel Method in Fault Warning Systems

by

Jia Tian, Xingqin Zhang, Shuangqing Zheng, Zhiyong Liu and Changshu Zhan

Mathematics 2024, 12(9), 1301; https://doi.org/10.3390/math12091301 (registering DOI) - 25 Apr 2024

Abstract

In the realm of automated industry and smart production, the deployment of fault warning systems is crucial for ensuring equipment reliability and enhancing operational efficiency. Although there are a multitude of existing methodologies for fault warning, the proficiency of these systems in processing and analysing data is increasingly challenged by the progression of industrial apparatus and the escalating magnitude and intricacy of the data involved. To address these challenges, this research outlines an innovative fault warning methodology that combines a bi-directional long short-term memory (Bi-LSTM) network with an enhanced hunter–prey optimisation (EHPO) algorithm. The Bi-LSTM network is strategically utilised to outline complex temporal patterns in machinery operational data, while the EHPO algorithm is employed to meticulously fine-tune the hyperparameters of the Bi-LSTM, aiming to enhance the accuracy and generalisability of fault warning. The EHPO algorithm, building upon the foundational hunter–prey optimisation (HPO) framework, introduces an advanced population initialisation process, integrates a range of strategic exploration methodologies, and strengthens its search paradigms through the incorporation of the differential evolution (DE) algorithm. This comprehensive enhancement aims to boost the global search efficiency and accelerate the convergence speed of the algorithm. Empirical analyses, conducted using datasets from real-world industrial scenarios, have validated the improved warning performance of this proposed methodology against some benchmark techniques, as evidenced by superior metrics such as root mean square error (RMSE) and mean absolute error (MAE), albeit with a slight increase in computational resource requirements. This study not only proposes a novel paradigm for fault warning within complex industrial frameworks but also contributes to the discourse on hyperparameter optimisation within the field of machine learning algorithms.

(This article belongs to the Special Issue Computational Intelligence and Machine Learning with Applications)

Open Access Article

Estimating the Complier Average Causal Effect with Non-Ignorable Missing Outcomes Using Likelihood Analysis

by

Jierui Du, Gao Wen and Xin Liang

Mathematics 2024, 12(9), 1300; https://doi.org/10.3390/math12091300 (registering DOI) - 25 Apr 2024

Abstract

Missing data problems arise in randomized trials, which complicates the inference of causal effects if the missing mechanism is non-ignorable. We tackle the challenge of identifying and estimating the complier average causal effect parameters under non-ignorable missingness by increasing the covariates to mitigate the sensitivity to the violation of specific identification assumptions. The missing data mechanism is assumed to follow a logistic model, wherein the absence of the outcome is explained by the outcome itself, the treatment received, and the covariates. We establish the identifiability of the models under mild conditions by assuming that the outcome follows a normal distribution. We develop a computational method to estimate model parameters through a two-step likelihood estimation approach, employing subgroup analysis. The bootstrap method is employed for variance estimation, and the effectiveness of our approach is confirmed through simulation. We applied the proposed method to analyze the household income dataset from the Chinese Household Income Project Survey 2013.

(This article belongs to the Special Issue Advances in Statistical AI and Casual Inference)

Open AccessArticle

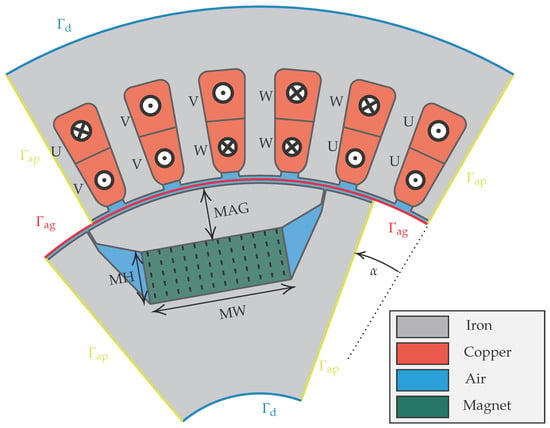

Robust Design Optimization of Electric Machines with Isogeometric Analysis

by

Theodor Komann, Michael Wiesheu, Stefan Ulbrich and Sebastian Schöps

Mathematics 2024, 12(9), 1299; https://doi.org/10.3390/math12091299 (registering DOI) - 25 Apr 2024

Abstract

In electric machine design, efficient methods for the optimization of the geometry and associated parameters are essential. Nowadays, it is necessary to address the uncertainty caused by manufacturing or material tolerances. This work presents a robust optimization strategy to address uncertainty in the design of a three-phase, six-pole permanent magnet synchronous motor (PMSM). The geometry is constructed in a two-dimensional framework within MATLAB®, employing isogeometric analysis (IGA) to enable flexible shape optimization. The main contributions of this research are twofold. First, we integrate shape optimization with parameter optimization to enhance the performance of PMSM designs. Second, we use robust optimization, which creates a min–max problem, to ensure that the motor maintains its performance when facing uncertainties. To solve this bilevel problem, we work with the maximal value functions of the lower-level maximization problems and apply a version of Danskin’s theorem for the computation of generalized derivatives. Additionally, the adjoint method is employed to efficiently solve the lower-level problems with gradient-based optimization. The paper concludes by presenting numerical results showcasing the efficacy of the proposed robust optimization framework. The results indicate that the optimized PMSM designs not only perform competitively compared to their non-robust counterparts but also show resilience to operational and manufacturing uncertainties, making them attractive for industrial applications.

(This article belongs to the Special Issue Numerical Optimization for Electromagnetic Problems)

Open Access Article

Mining Trajectory Planning of Unmanned Excavator Based on Machine Learning

by

Zhong Jin, Mingde Gong, Dingxuan Zhao, Shaomeng Luo, Guowang Li, Jiaheng Li, Yue Zhang and Wenbin Liu

Mathematics 2024, 12(9), 1298; https://doi.org/10.3390/math12091298 (registering DOI) - 25 Apr 2024

Abstract

Trajectory planning plays a crucial role in achieving unmanned excavator operations. With traditional theoretical methods, the quality of trajectory planning results relies heavily on the level of rules extracted from objects such as scenes and optimization objectives. To address this issue, this study focuses on professional operators and employs machine learning methods for job trajectory planning, thereby obtaining planned trajectories which exhibit excellent characteristics similar to those of professional operators. Under typical working conditions, data collection and analysis are conducted on the job trajectories of professional operators, with key points being extracted. Machine learning is then utilized to train models under different parameters in order to obtain the optimal model. To ensure sufficient samples for machine learning training, the bootstrap method is employed to adequately expand the sample size. Compared with the traditional spline curve method, the trajectories generated by machine learning models reduce the maximum speeds of the excavator's boom arm, dipper stick, bucket, and swing joint by 8.64 deg/s, 10.24 deg/s, 18.33 deg/s, and 1.6 deg/s, respectively; moreover, the error does not exceed 2.99 deg when compared with curves drawn by professional operators; and, finally, the trajectories generated by this model are continuously differentiable without position or velocity discontinuities, and their overall performance surpasses that of those generated by the traditional spline curve method. This paper proposes a trajectory generation method that combines the skill of expert operators with machine learning and establishes a machine learning-based trajectory-planning model that eliminates the need for manually establishing complex rules. It is applicable to motion path planning in various working conditions of unmanned excavators.

Full article

Figure 1

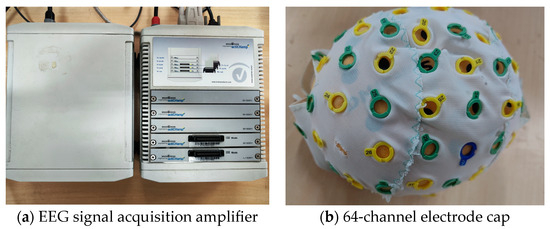

Open Access Article

Exploring an Intelligent Classification Model for the Recognition of Automobile Sounds Based on EEG Physiological Signals

by

Jingjing Guo, Tao Xu, Liping Xie and Zhien Liu

Mathematics 2024, 12(9), 1297; https://doi.org/10.3390/math12091297 - 25 Apr 2024

Abstract

The advancement of an intelligent automobile sound switching system has the potential to elevate the market standing of automotive products, with the pivotal prerequisite being the selection of automobile sounds based on the driver’s subjective perception. The subjective responses of diverse individuals to sounds can be objectively manifested through EEG signals. Therefore, EEG signals are employed herein to attain the recognition of automobile sounds. A subjective evaluation and EEG signal acquisition experiment are designed involving the stimulation of three distinct types of automobile sounds, namely comfort, power, and technology sounds, and a comprehensive database of EEG signals corresponding to these three sound qualities is established. Then, a specific transfer learning model based on a convolutional neural network (STL-CNN) is formulated, where the method of training the upper layer parameters with the fixed bottom weights is proposed to adaptively extract the EEG features related to automobile sounds. These improvements contribute to improving the generalization ability of the model and realizing the recognition of automobile sounds fused with EEG signals. The results of the comparison with traditional support vector machine (SVM) and convolutional neural network (CNN) models demonstrate that the accuracy of the test set of the STL-CNN model reaches 91.5%. Moreover, its comprehensive performance, coupled with the ability to adapt to individual differences, surpasses that of both SVM and CNN models. The demonstrated method in the recognition of automobile sounds based on EEG signals is of significance for the future implementation of switching driving sound modes fused with EEG signals.

Open AccessArticle

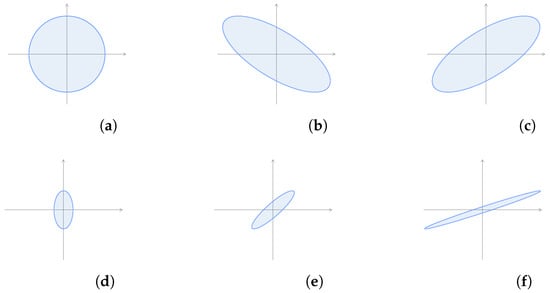

Shearlet Transform Applied to a Prostate Cancer Radiomics Analysis on MR Images

by

Rosario Corso, Alessandro Stefano, Giuseppe Salvaggio and Albert Comelli

Mathematics 2024, 12(9), 1296; https://doi.org/10.3390/math12091296 - 25 Apr 2024

Abstract

For decades, wavelet theory has attracted interest in several fields in dealing with signals. Nowadays, it is acknowledged that it is not very suitable to face aspects of multidimensional data like singularities and this has led to the development of other mathematical tools. A recent application of wavelet theory is in radiomics, an emerging field aiming to improve diagnostic, prognostic and predictive analysis of various cancer types through the analysis of features extracted from medical images. In this paper, for a radiomics study of prostate cancer with magnetic resonance (MR) images, we apply a similar but more sophisticated tool, namely the shearlet transform which, in contrast to the wavelet transform, allows us to examine variations along more orientations. In particular, we conduct a parallel radiomics analysis based on the two different transformations and highlight a better performance (evaluated in terms of statistical measures) in the use of the shearlet transform (in absolute value). The results achieved suggest taking the shearlet transform into consideration for radiomics studies in other contexts.

(This article belongs to the Special Issue Artificial Intelligence for Biomedical Image Processing and Data Analysis)

Open AccessReview

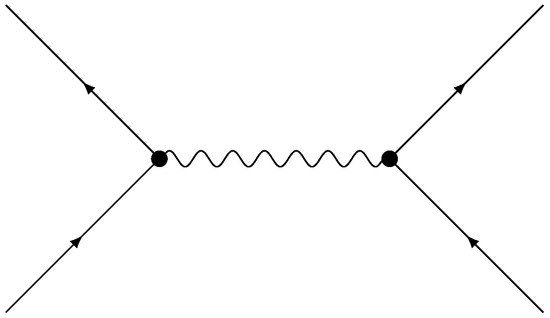

Feynman Diagrams beyond Physics: From Biology to Economy

by

Nicolò Cangiotti

Mathematics 2024, 12(9), 1295; https://doi.org/10.3390/math12091295 - 25 Apr 2024

Abstract

Feynman diagrams represent one of the most powerful and fascinating tools developed in theoretical physics in the last century. Introduced within the framework of quantum electrodynamics as a suitable method for computing the amplitude of a physical process, they rapidly became a fundamental mathematical object in quantum field theory. However, their abstract nature seems to suggest a wider usage, which actually exceeds the physical context. Indeed, as mathematical objects, they could simply be considered graphs that depict not only physical quantities but also biological or economic entities. We survey the analytical and algebraic properties of such diagrams to understand their utility in several areas of science, eventually providing some examples of recent applications.

(This article belongs to the Special Issue Algebraic Structures and Graph Theory, 2nd Edition)

Open Access Article

Improvement of Distributed Denial of Service Attack Detection through Machine Learning and Data Processing

by

Fray L. Becerra-Suarez, Ismael Fernández-Roman and Manuel G. Forero

Mathematics 2024, 12(9), 1294; https://doi.org/10.3390/math12091294 - 25 Apr 2024

Abstract

The early and accurate detection of Distributed Denial of Service (DDoS) attacks is a fundamental area of research to safeguard the integrity and functionality of organizations’ digital ecosystems. Despite the growing importance of neural networks in recent years, the use of classical techniques remains relevant due to their interpretability, speed, resource efficiency, and satisfactory performance. This article presents the results of a comparative analysis of six machine learning techniques, namely, Random Forest (RF), Decision Tree (DT), AdaBoost (ADA), Extreme Gradient Boosting (XGB), Multilayer Perceptron (MLP), and Dense Neural Network (DNN), for classifying DDoS attacks. The CICDDoS2019 dataset was used, which underwent data preprocessing to remove outliers, and 22 features were selected using the Pearson correlation coefficient. The RF classifier achieved the best accuracy rate (99.97%), outperforming other classifiers and even previously published neural network-based techniques. These findings underscore the feasibility and effectiveness of machine learning algorithms in the field of DDoS attack detection, reaffirming their relevance as a valuable tool in advanced cyber defense.
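The abstract above mentions selecting features with the Pearson correlation coefficient but does not say how the selection was carried out. As a minimal sketch, assuming features are ranked by the absolute value of their correlation with the class label and the top `k` are kept, the idea could look like this in Python. The function names, the toy columns, and the ranking rule are all illustrative assumptions, not the authors' procedure.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant column carries no linear signal
    return cov / math.sqrt(var_x * var_y)


def select_features(columns, labels, k):
    """Return the names of the k columns with the largest |r| against labels.

    Assumption: ranking by |r| with the label; the source does not specify this.
    """
    scores = {name: abs(pearson(values, labels)) for name, values in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Hypothetical toy data: three candidate features and a binary attack label.
columns = {
    "packet_rate": [1.0, 2.0, 3.0, 4.0],
    "constant": [5.0, 5.0, 5.0, 5.0],
    "noise": [1.0, -1.0, 1.0, -1.0],
}
labels = [0, 0, 1, 1]

selected = select_features(columns, labels, k=1)
print(selected)
```

In this toy data only `packet_rate` moves with the label, so it is the feature that survives the selection. A real pipeline might instead drop features that are highly correlated with each other; that variant is equally compatible with the wording of the abstract.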

(This article belongs to the Special Issue Network Security in Artificial Intelligence Systems)

Open Access Article

Comparisons of Numerical and Solitary Wave Solutions for the Stochastic Reaction–Diffusion Biofilm Model including Quorum Sensing

by

Muhammad Zafarullah Baber, Nauman Ahmed, Muhammad Waqas Yasin, Muhammad Sajid Iqbal, Ali Akgül, Alicia Cordero and Juan R. Torregrosa

Mathematics 2024, 12(9), 1293; https://doi.org/10.3390/math12091293 - 24 Apr 2024

Abstract

This study deals with a stochastic reaction–diffusion biofilm model under quorum sensing. Quorum sensing is a process of cell-to-cell communication that lets bacteria communicate about cell density and adjust gene expression. This model produces two results: the bacterial concentration, which over time demonstrates the development and decomposition of the biofilm, and the biofilm bacteria collaboration, which demonstrates the potency of resistance and defense against environmental stimuli. In this study, we investigate numerical solutions and exact solitary wave solutions in the presence of randomness. A finite difference scheme is proposed for the numerical solutions, while the generalized Riccati equation mapping method is applied to construct exact solitary wave solutions. The numerical scheme is analyzed by checking consistency and stability. The consistency of the scheme is established in the mean square sense, while the stability condition is obtained with the help of the von Neumann criterion. Exact stochastic solitary wave solutions are constructed in hyperbolic, trigonometric, and rational forms. Some solutions are plotted in 3D and 2D to show dark, bright, and solitary wave solutions as well as the effects of noise. Finally, the numerical results are compared with the exact solitary wave solutions with the help of unique physical problems. The comparison plots are presented in three dimensions and as line representations for different values of the parameters.

Open Access Article

Intelligent Low-Consumption Optimization Strategies: Economic Operation of Hydropower Stations Based on Improved LSTM and Random Forest Machine Learning Algorithm

by

Hong Pan, Jie Yang, Yang Yu, Yuan Zheng, Xiaonan Zheng and Chenyang Hang

Mathematics 2024, 12(9), 1292; https://doi.org/10.3390/math12091292 - 24 Apr 2024

Abstract

The economic operation of hydropower stations has the potential to increase water use efficiency. However, there are some challenges, such as the fixed and unchangeable flow characteristic curve of the hydraulic turbines, and the large number of variables in optimal load distribution, which limit the progress of research. In this paper, we propose a new optimization method for the economic operation of hydropower stations based on an improved Long Short-Term Memory neural network (I-LSTM) and the Random Forest (RF) algorithm. Firstly, in order to accurately estimate the water consumption, the LSTM model’s hyperparameters are optimized using improved particle swarm optimization, and the I-LSTM method is proposed to fit the flow characteristic curve of the hydraulic turbines. Secondly, the Random Forest machine learning algorithm is introduced to establish a load-distribution model with its powerful feature extraction and learning ability. To improve the accuracy of the load-distribution model, we use the K-means algorithm to cluster the historical data and optimize the parameters of the Random Forest model. A hydropower station in China is selected for a case study. It is shown that (1) the I-LSTM method fits the operating characteristics under various working conditions and actual operating characteristics of hydraulic turbines, ensuring that they are closest to the actual operating state; (2) when the I-LSTM method is compared with Support Vector Machine (SVM), Extreme Learning Machine (ELM), and Long Short-Term Memory neural network (LSTM) models, the prediction results of SVM have a large error, while, relative to ELM and LSTM, the I-LSTM method reduces MSE by about 46% and 38%, MAE by about 25% and 21%, and RMSE by about 27% and 24%, respectively; (3) the RF algorithm performs better than the traditional dynamic programming algorithm in load distribution. With the passage of time and the increase in training samples, the prediction accuracy of the Random Forest model has steadily improved, which helps to achieve optimal operation of the units, reducing their average total water consumption by 1.24%. This study provides strong support for the application of intelligent low-consumption optimization strategies in hydropower fields, which can bring higher economic benefits and resource savings to renewable energy production.
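The comparison in the abstract above is reported as percentage reductions in MSE, MAE, and RMSE. As a small sketch of how such figures are typically obtained, the Python below computes the three metrics and a relative reduction against a baseline. The formula `(baseline - improved) / baseline` and the toy predictions are assumptions for illustration; the abstract does not state how its percentages were calculated.

```python
import math


def mse(y_true, y_pred):
    """Mean squared error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(mse(y_true, y_pred))


def relative_reduction(baseline, improved):
    """Fraction by which `improved` lowers the error of `baseline` (assumed formula)."""
    return (baseline - improved) / baseline


# Hypothetical toy values, not data from the study.
y_true = [1.0, 2.0, 3.0, 4.0]
baseline_pred = [1.0, 2.0, 3.0, 6.0]
improved_pred = [1.0, 2.0, 3.0, 5.0]

for name, metric in (("MSE", mse), ("MAE", mae), ("RMSE", rmse)):
    reduction = relative_reduction(metric(y_true, baseline_pred),
                                   metric(y_true, improved_pred))
    print(f"{name} reduced by {reduction:.0%}")
```

Because MSE squares the errors, the same improvement in predictions yields a larger relative reduction in MSE than in MAE or RMSE, which is consistent with the three metrics carrying different percentages in the abstract.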

(This article belongs to the Special Issue Computational Methods and Applications for Numerical Analysis, 2nd Edition)

Open Access Article

Multi-Objective Portfolio Optimization Using a Quantum Annealer

by

Esteban Aguilera, Jins de Jong, Frank Phillipson, Skander Taamallah and Mischa Vos

Mathematics 2024, 12(9), 1291; https://doi.org/10.3390/math12091291 - 24 Apr 2024

Abstract

In this study, the portfolio optimization problem is explored, using a combination of classical and quantum computing techniques. The portfolio optimization problem with specific objectives or constraints is often a quadratic optimization problem, due to the quadratic nature of, for example, risk measures. Quantum computing is a promising solution for quadratic optimization problems, as it can leverage quantum annealing and quantum approximate optimization algorithms, which are expected to tackle these problems more efficiently. Quantum computing takes advantage of quantum phenomena like superposition and entanglement. In this paper, a specific problem is introduced, where a portfolio of loans needs to be optimized for 2030, considering ‘Return on Capital’ and ‘Concentration Risk’ objectives, as well as a carbon footprint constraint. This paper introduces the formulation of the problem and shows how it can be optimized with quantum computing by reformulating it as a quadratic unconstrained binary optimization (QUBO) problem. Two QUBO formulations are presented, each addressing different aspects of the problem. The QUBO formulation succeeded in finding solutions that met the emission constraint, although classical simulated annealing still outperformed quantum annealing in solving this QUBO, in terms of solutions close to the Pareto frontier. Overall, this paper provides insights into how quantum computing can address complex optimization problems in the financial sector. It also highlights the potential of quantum computing for providing more efficient and robust solutions for portfolio management.
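To make the QUBO reformulation mentioned in the abstract above more concrete, here is a toy sketch in Python. It is not the formulation from the paper: it assumes a single return objective, replaces the carbon footprint constraint with a quadratic penalty that pulls total emissions toward a target `budget`, and solves the resulting QUBO by brute force rather than on an annealer. All names and numbers are hypothetical.

```python
from itertools import product


def build_qubo(returns, emissions, budget, penalty):
    """Upper-triangular QUBO for: minimize -sum(r_i x_i) + penalty * (sum(e_i x_i) - budget)^2.

    Uses x_i^2 = x_i for binary x_i; the constant term penalty * budget^2 is dropped.
    """
    n = len(returns)
    q = [[0.0] * n for _ in range(n)]
    for i in range(n):
        q[i][i] = -returns[i] + penalty * (emissions[i] ** 2 - 2 * budget * emissions[i])
        for j in range(i + 1, n):
            q[i][j] = 2 * penalty * emissions[i] * emissions[j]
    return q


def energy(q, x):
    """QUBO energy x^T Q x for an upper-triangular Q and a binary vector x."""
    n = len(x)
    return sum(q[i][j] * x[i] * x[j] for i in range(n) for j in range(i, n))


def brute_force_minimum(q):
    """Enumerate all 2^n binary vectors; only feasible for tiny toy problems."""
    return min(product((0, 1), repeat=len(q)), key=lambda x: energy(q, x))


# Hypothetical toy instance: three loans with returns and emissions.
returns = [3, 2, 4]
emissions = [2, 1, 3]
q = build_qubo(returns, emissions, budget=3, penalty=10)

best = brute_force_minimum(q)
print(best, energy(q, best))
```

With these toy numbers, two selections hit the emission target exactly (loans 0 and 1 together, or loan 2 alone), and the penalty weight is large enough that the minimizer picks the one with the higher return. An inequality constraint, as a carbon cap would normally be, usually needs extra slack variables; the equality-style penalty here is a simplification chosen to keep the sketch short.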

(This article belongs to the Section Mathematics and Computer Science)

Open Access Article

Traffic Prediction with Self-Supervised Learning: A Heterogeneity-Aware Model for Urban Traffic Flow Prediction Based on Self-Supervised Learning

by

Min Gao, Yingmei Wei, Yuxiang Xie and Yitong Zhang

Mathematics 2024, 12(9), 1290; https://doi.org/10.3390/math12091290 - 24 Apr 2024

Abstract

Accurate traffic prediction is pivotal when constructing intelligent cities to enhance urban mobility and to efficiently manage traffic flows. Traditional deep learning-based traffic prediction models primarily focus on capturing spatial and temporal dependencies, thus overlooking the existence of spatial and temporal heterogeneities. Heterogeneity is a crucial inherent characteristic of traffic data for the practical applications of traffic prediction. Spatial heterogeneities refer to the differences in traffic patterns across different regions, e.g., variations in traffic flow between office and commercial areas. Temporal heterogeneities refer to the changes in traffic patterns across different time steps, e.g., from morning to evening. Although existing models attempt to capture heterogeneities through predefined handcrafted features, multiple sets of parameters, and the fusion of spatial–temporal graphs, there are still some limitations. We propose a self-supervised learning-based traffic prediction framework called Traffic Prediction with Self-Supervised Learning (TPSSL) to address this issue. This framework leverages a spatial–temporal encoder for the prediction task and introduces adaptive data masking to enhance the robustness of the model against noise disturbances. Moreover, we introduce two auxiliary self-supervised learning paradigms to capture spatial heterogeneities and temporal heterogeneities, which also enrich the embeddings of the primary prediction task. We conduct experiments on four widely used traffic flow datasets, and the results demonstrate that TPSSL achieves state-of-the-art performance in traffic prediction tasks.

Open Access Article

Genetic Algorithms Application for Pricing Optimization in Commodity Markets

by Yiyu Li, Qingjie Xu, Ying Wang and Bin Liu

Mathematics 2024, 12(9), 1289; https://doi.org/10.3390/math12091289 - 24 Apr 2024

Abstract

The perishable nature of vegetable commodities poses challenges for superstores, as reselling them is often unfeasible due to their short freshness period. Reliable market demand analysis is crucial for boosting revenue. This study simplifies the pricing and replenishment decision-making process by making reasonable assumptions about the selling time, wastage rate, and replenishment time for vegetable commodities. A single-objective planning model with the objective of profit maximization was constructed by fitting historical data using the nonparametric method of support vector regression (SVR). The study reveals a specific relationship between sales volume and cost-plus pricing for each category and predicts future cost changes using an LSTM model. Combining these findings, we substitute the relationship between sales volume and pricing as well as the LSTM prediction data into the model, and solve it using genetic algorithms in machine learning to derive the optimal replenishment volume and pricing strategy. Practical results show that the method can provide reasonable pricing and replenishment strategies for vegetable superstores, and after careful accounting, we arrive at an expected profit of RMB 22,703.14. The actual profit of the supermarket was RMB 19,732.89. The method, therefore, increases the profit of the vegetable superstore by 13.08%. By optimizing inventory management and pricing decisions, the superstore can better meet the challenges of vegetable commodities and achieve sustainable development.

(This article belongs to the Special Issue Mathematical Modeling and Machine Learning with Application to Economics and Finance)

Open Access Article

Local $C^{0,1}$-Regularity for the Parabolic p-Laplacian Equation on the Group SU(3)

by Yongming He, Chengwei Yu and Hongqing Wang

Mathematics 2024, 12(9), 1288; https://doi.org/10.3390/math12091288 - 24 Apr 2024

Abstract

In this article, when

(This article belongs to the Special Issue Research on Dynamical Systems and Differential Equations)

Open Access Article

A Weighted Skew-Logistic Distribution with Applications to Environmental Data

by Isaac Cortés, Jimmy Reyes and Yuri A. Iriarte

Mathematics 2024, 12(9), 1287; https://doi.org/10.3390/math12091287 - 24 Apr 2024

Abstract

Skewness and bimodality properties are frequently observed when analyzing environmental data such as wind speeds, precipitation levels, and ambient temperatures. As an alternative to modeling data exhibiting these properties, we propose a flexible extension of the skew-logistic distribution. The proposal corresponds to a weighted version of the skewed logistic distribution, defined by a parametric weight function that allows shapes with up to three modes for the resulting density. Parameter estimation via the maximum likelihood approach is discussed. Simulation experiments are carried out to evaluate the performance of the estimators. Applications to environmental data illustrating the utility of the proposal are presented.

(This article belongs to the Section Probability and Statistics)

Open Access Article

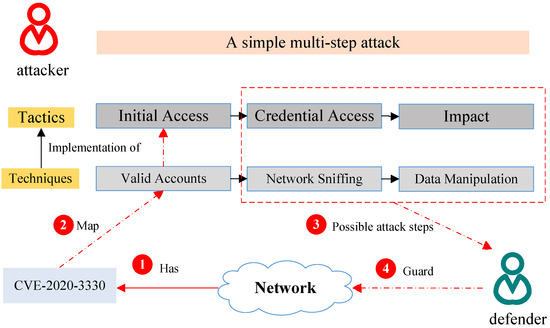

VTT-LLM: Advancing Vulnerability-to-Tactic-and-Technique Mapping through Fine-Tuning of Large Language Model

by Chenhui Zhang, Le Wang, Dunqiu Fan, Junyi Zhu, Tang Zhou, Liyi Zeng and Zhaohua Li

Mathematics 2024, 12(9), 1286; https://doi.org/10.3390/math12091286 - 24 Apr 2024

Abstract

Vulnerabilities are often accompanied by cyberattacks. CVE is the largest repository of open vulnerabilities, and it keeps expanding. ATT&CK models known multi-step attacks both tactically and technically and remains up to date. For active defense, it is valuable to correlate each vulnerability in CVE with the corresponding ATT&CK tactics and techniques that exploit it. Manual mapping is not only time-consuming but also difficult to keep up to date. Existing language-based automated mapping methods do not utilize the information associated with attack behaviors outside of CVE and ATT&CK and are therefore ineffective. In this paper, we propose a novel framework named VTT-LLM for mapping Vulnerabilities to Tactics and Techniques based on Large Language Models, which consists of a generation model and a mapping model. In order to generate fine-tuning instructions for the LLM, we create a template to extract knowledge from CWE (a standardized list of common weaknesses) and CAPEC (a standardized list of common attack patterns). We train the generation model of VTT-LLM by fine-tuning the LLM according to the above instructions. The generation model correlates vulnerabilities and attacks through their descriptions. The mapping model transforms the descriptions of ATT&CK tactics and techniques into vectors through text embedding and further associates them with attacks through semantic matching. By leveraging the knowledge of CWE and CAPEC, VTT-LLM can eventually automate the process of linking vulnerabilities in CVE to the attack techniques and tactics of ATT&CK. Experiments on the latest public dataset, ChatGPT-VDMEval, show the effectiveness of VTT-LLM with an accuracy of 85.18%, which is 13.69% and 54.42% higher than the existing CVET and ChatGPT-based methods, respectively. In addition, compared to fine-tuning without outside knowledge, the accuracy of VTT-LLM with chain fine-tuning is 9.24% higher on average across different LLMs.

(This article belongs to the Special Issue Advanced Research on Information System Security and Privacy)

Topics

Topic in

Axioms, Computation, MCA, Mathematics, Symmetry

Mathematical Modeling

Topic Editors: Babak Shiri, Zahra Alijani

Deadline: 31 May 2024

Topic in

Algorithms, Axioms, Fractal Fract, Mathematics, Symmetry

Fractal and Design of Multipoint Iterative Methods for Nonlinear Problems

Topic Editors: Xiaofeng Wang, Fazlollah Soleymani

Deadline: 30 June 2024

Topic in

Algorithms, Computation, Information, Mathematics

Complex Networks and Social Networks

Topic Editors: Jie Meng, Xiaowei Huang, Minghui Qian, Zhixuan Xu

Deadline: 31 July 2024

Topic in

Algorithms, Future Internet, Information, Mathematics, Symmetry

Research on Data Mining of Electronic Health Records Using Deep Learning Methods

Topic Editors: Dawei Yang, Yu Zhu, Hongyi Xin

Deadline: 31 August 2024

Special Issues

Special Issue in

Mathematics

Advances in the Mathematics of Ecological Modelling

Guest Editors: Dmitrii O. Logofet, Larisa Khanina, Pavel Grabarnik

Deadline: 30 April 2024

Special Issue in

Mathematics

Advances in Linear Recurrence System

Guest Editors: Lorentz Jäntschi, Virginia Niculescu

Deadline: 15 May 2024

Special Issue in

Mathematics

New Trends on Boundary Value Problems

Guest Editors: Miklós Rontó, András Rontó, Nino Partsvania, Bedřich Půža, Hriczó Krisztián

Deadline: 31 May 2024

Special Issue in

Mathematics

Applications of Fuzzy Modeling in Risk Management

Guest Editors: Edit Toth-Laufer, László Pokorádi

Deadline: 20 June 2024

Topical Collections

Topical Collection in

Mathematics

Topology and Foundations

Collection Editors: Lorentz Jäntschi, Dušanka Janežič

Topical Collection in

Mathematics

Multiscale Computation and Machine Learning

Collection Editors: Yalchin Efendiev, Eric Chung

Topical Collection in

Mathematics

Theoretical and Mathematical Ecology

Collection Editor: Yuri V. Tyutyunov